E-commerce Transformation Overview

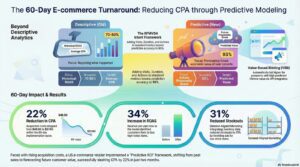

In the increasingly saturated and competitive landscape of United States electronic commerce, the convergence of rising Customer Acquisition Costs (CAC), data privacy regulations, and signal loss has necessitated a fundamental paradigm shift in marketing strategy. This comprehensive research report details the operational, technical, and strategic transformation of a mid-market US-based e-commerce retailer that successfully engineered a 22% reduction in Cost Per Acquisition (CPA) over a strict 60-day implementation cycle.

The central mechanism of this turnaround was the deployment of Predictive ROI Modeling—a sophisticated data science framework that pivots from retrospective, descriptive analytics to forward-looking, probabilistic forecasting. By integrating disjointed data silos into a unified Customer Data Platform (CDP) and applying machine learning algorithms to estimate the future economic value of individual user cohorts, the organization was able to identify “high-intent” segments with precision. Crucially, the strategy moved beyond static segmentation to dynamic, value-based bidding (VBB) in real-time advertising auctions, specifically within Google Ads and Meta platforms.

This report provides an exhaustive analysis of the transformation. It covers the theoretical underpinnings of Predictive Customer Lifetime Value (pCLTV) and RFMVDA (Recency, Frequency, Monetary, Visits, Duration, Actions) modeling. It details the technical architecture required to capture and hash first-party data for privacy-compliant activation. It also examines the specific operational steps taken during the 60-day sprint, from data audit to algorithmic bid shading. Finally, it analyzes the broader business impacts, including a 34% increase in Return on Ad Spend (ROAS), a 31% reduction in inventory stockouts through demand-aligned marketing, and the strategic implications for the wider e-commerce industry as it moves toward an AI-driven future.

1. Introduction: The Structural Crisis in E-commerce Efficiency

1.1 The Post-Privacy Economic Landscape

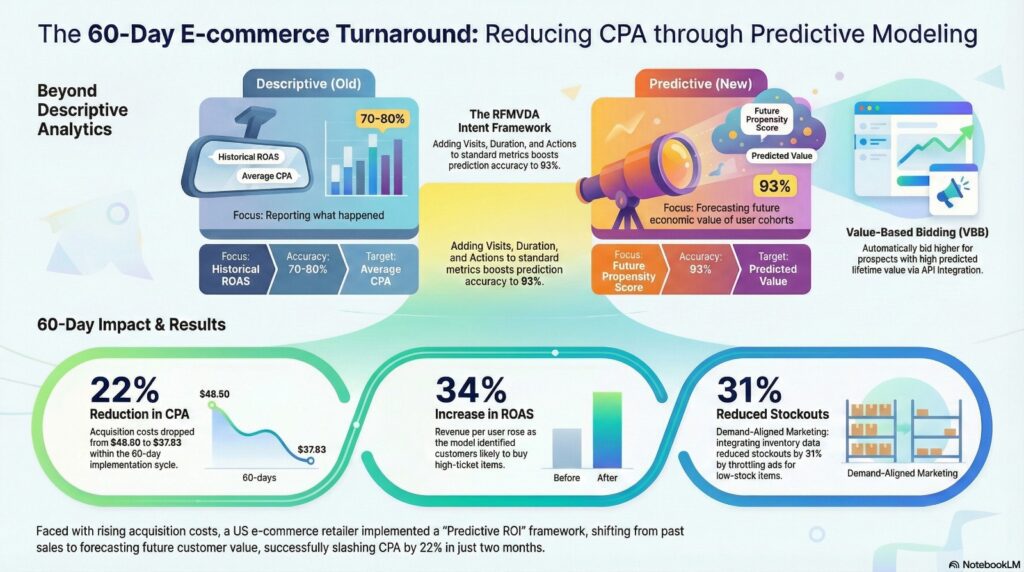

The defining characteristic of the US e-commerce market in the mid-2020s is the decoupling of ad spend from guaranteed revenue. For the better part of the previous decade, digital advertising operated on a deterministic model where tracking pixels provided near-perfect visibility into the customer journey. However, the introduction of App Tracking Transparency (ATT) by Apple, the degradation of third-party cookies, and the rise of privacy-first browsing have severely blunted the effectiveness of traditional “broad targeting”.

Simultaneously, the cost of media has escalated. Market saturation has driven CPMs (Cost Per Mille) to historic highs, forcing brands to pay more for fewer impressions. The result is a dual crisis: brands are paying more to reach audiences they can no longer clearly identify. In this environment, the traditional metric of “Average CPA” becomes misleading. It averages the cost of acquiring high-value, loyal customers with the cost of acquiring low-value, one-time purchasers, obscuring the fact that a significant portion of the marketing budget is often deployed inefficiently on segments that will never yield a positive return.

1.2 The Limitations of Descriptive Analytics

Most e-commerce organizations rely on descriptive analytics—reporting on what has already happened. Dashboards filled with retrospective metrics like “Last Month’s Revenue” or “Yesterday’s ROAS” are standard. While necessary for financial reporting, these metrics are insufficient for operational decision-making in real-time auctions.

Descriptive analytics suffers from a critical lag. By the time a marketer realizes a campaign is underperforming based on last-click attribution, the budget has already been spent. Furthermore, descriptive models often fail to account for the potential of a customer. A customer who buys a low-margin entry-level product is often treated as less valuable than a customer who buys a mid-range item, even if the former exhibits behavioral signals (such as frequent support page visits or high engagement with loyalty content) that predict a much higher lifetime value.

1.3 The Predictive Imperative

The solution explored in this case study is the shift to Predictive Analytics. This discipline utilizes statistical techniques, including data mining, predictive modeling, and machine learning, to analyze current and historical facts to make predictions about future or otherwise unknown events.

In the context of this case study, the organization moved to answer three specific questions:

- Who will buy? (Propensity Scoring)

- How much will they spend over time? (Predictive CLTV)

- What is the maximum we should pay to acquire them? (Value-Based Bidding)

By answering these questions before the bid is placed in the ad auction, the retailer was able to “shade” their bids—bidding aggressively for high-intent users while drastically reducing spend on low-probability traffic. This capability is not merely a tactical optimization; it is a strategic asset that allows the firm to compete on information asymmetry rather than just budget size.

2. Theoretical Framework: From RFM to Deep Learning

2.1 The Evolution of Customer Segmentation

To understand the transformation, one must first understand the evolution of the models used to value customers.

2.1.1 Traditional RFM Analysis

The foundational layer of customer segmentation is RFM Analysis: Recency (how long since the last purchase), Frequency (how often they purchase), and Monetary Value (how much they spend).

- Recency: Generally, the more recently a customer has purchased, the more responsive they are to promotions.

- Frequency: Repeat buyers are more likely to continue buying than first-time buyers.

- Monetary: Heavy spenders are often treated as VIPs.

While robust, traditional RFM has limitations. It is backward-looking and often static. A customer who bought a sofa yesterday has high Recency and Monetary scores, but if they only need a sofa once every ten years, their immediate future value is low. Traditional RFM might flag this user for aggressive retargeting, wasting budget.
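
As a rough illustration of the sofa example, the raw RFM values can be computed from an order log as sketched below. The function name `rfm_summary`, the tuple layout, and the sample orders are assumptions made for this sketch, not the subject company's implementation.

```python
from datetime import date

def rfm_summary(orders, today):
    """Compute raw RFM values per customer.

    orders: iterable of (customer_id, order_date, amount) tuples (hypothetical layout).
    Returns {customer_id: (recency_days, frequency, monetary)}.
    """
    summary = {}
    for customer_id, order_date, amount in orders:
        last, freq, total = summary.get(customer_id, (None, 0, 0.0))
        if last is None or order_date > last:
            last = order_date
        summary[customer_id] = (last, freq + 1, total + amount)
    return {
        cid: ((today - last).days, freq, round(total, 2))
        for cid, (last, freq, total) in summary.items()
    }

# Illustrative data only: a one-off sofa purchase vs. a steady repeat buyer.
orders = [
    ("sofa_buyer", date(2025, 3, 30), 1200.00),
    ("regular", date(2025, 1, 10), 40.00),
    ("regular", date(2025, 2, 12), 55.00),
    ("regular", date(2025, 3, 15), 45.00),
]
rfm = rfm_summary(orders, today=date(2025, 3, 31))
```

Here the sofa buyer scores best on Recency and Monetary despite having a single order, which is exactly the case where a static RFM ranking can mislead.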

2.1.2 Enhanced RFMVDA Modeling

To overcome the limitations of RFM, the subject company adopted an expanded model known as RFMVDA, which adds Visits, Duration, and Actions to the equation.

- Visits: The frequency of site visits, regardless of transaction. A user who visits daily but hasn’t bought yet may be high-intent but price-sensitive.

- Duration: The time spent per session. Deep engagement with product detail pages (PDPs) signals different intent than quick bounces from the homepage.

- Actions: Specific micro-conversions, such as downloading a size guide, reading shipping policies, or watching a product video.

Research indicates that adding these behavioral dimensions allows for “session-level” prediction. Deep Neural Networks (DNN) trained on RFMVDA inputs can achieve segmentation prediction accuracy of nearly 93%, significantly outperforming the 70-80% accuracy of standard RFM models.
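
The three behavioral dimensions can be aggregated from session logs before they are fed to any model. The sketch below is a minimal example; the `Session` fields and the choice of average duration per visit are assumptions, since the source does not specify how each dimension was computed.

```python
from dataclasses import dataclass

@dataclass
class Session:
    user_id: str
    duration_seconds: float
    actions: int  # count of micro-conversions (size-guide downloads, video views, ...)

def vda_features(sessions):
    """Aggregate Visits, Duration, and Actions per user from session records."""
    totals = {}
    for s in sessions:
        visits, duration, actions = totals.get(s.user_id, (0, 0.0, 0))
        totals[s.user_id] = (visits + 1, duration + s.duration_seconds, actions + s.actions)
    return {
        uid: {"visits": v, "avg_duration_seconds": d / v, "actions": a}
        for uid, (v, d, a) in totals.items()
    }

# Illustrative sessions only.
sessions = [
    Session("u1", 120.0, 2),
    Session("u1", 60.0, 0),
    Session("u2", 15.0, 0),
]
features = vda_features(sessions)
```

These per-user values would sit alongside the R, F, and M columns as model inputs.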

2.2 Predictive Customer Lifetime Value (pCLTV)

The “North Star” metric for the transformation was Predictive Customer Lifetime Value (pCLTV). Unlike historical CLV, which sums past transactions, pCLTV uses machine learning to forecast the net present value of all future streams of profit from a customer.
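
The "net present value" part of this definition is plain discounting. The sketch below assumes yearly periods and a single fixed discount rate, neither of which is stated in the source; the forecast profits would come from the model.

```python
def discounted_value(expected_profits, discount_rate):
    """Net present value of forecast per-period profits.

    expected_profits[0] is the profit expected one period from now.
    """
    return sum(
        profit / (1 + discount_rate) ** period
        for period, profit in enumerate(expected_profits, start=1)
    )

# Illustrative forecast: 110 next year and 121 the year after, discounted at 10%.
pcltv = discounted_value([110.0, 121.0], discount_rate=0.10)
```

With these illustrative inputs each year's profit discounts back to roughly 100, so the customer's predicted value is roughly 200.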

2.2.1 Probabilistic vs. Machine Learning Approaches

The team evaluated two approaches to pCLTV:

- Probabilistic Models (Pareto/NBD): These models assume customer purchasing follows specific statistical distributions (a Poisson process for transaction counts while the customer is “alive,” plus a latent dropout process after which the customer is “dead”). They work well when repeat-purchase behavior is stable and regular but struggle with the erratic nature of modern retail e-commerce.

- Deep Learning Models: These models treat the customer history as a sequence of events (similar to how a Large Language Model treats a sentence). By using Recurrent Neural Networks (RNNs) or Transformers, the model learns complex, non-linear patterns—for example, that a purchase of “Product A” is usually followed by a hiatus of 3 months, then a purchase of “Product B”.

The subject company utilized a hybrid approach: using probabilistic models for the “long tail” of low-data customers and deep learning models for the high-data segments to maximize accuracy.

2.3 Causal Inference and Uplift Modeling

A critical theoretical distinction introduced during the transformation was the difference between correlation and causation. A standard predictive model might tell you that “Users who click this ad buy frequently.” However, it doesn’t tell you whether the ad caused the purchase or whether the user would have bought anyway. To refine the ROI modeling, the team incorporated principles of Causal Inference. By running randomized controlled trials (RCTs) on specific segments (holding out a control group that saw no ads), the team calculated the Incremental ROAS (iROAS). This ensured that the budget was shifted not just to “people who buy,” but to “people who buy because of the ad.” This prevented the common error of cannibalizing organic sales with paid spend.
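
A minimal sketch of the iROAS calculation from a holdout test follows. It assumes users were randomly assigned, and it scales the control group's revenue per user up to the size of the treated group. The function name and all figures are illustrative, not results from the case study.

```python
def incremental_roas(treated_revenue, treated_users,
                     control_revenue, control_users, ad_spend):
    """Revenue caused by ads, per dollar of ad spend, from a randomized holdout."""
    baseline_per_user = control_revenue / control_users
    # Revenue the treated group would likely have produced with no ads at all.
    expected_without_ads = baseline_per_user * treated_users
    incremental_revenue = treated_revenue - expected_without_ads
    return incremental_revenue / ad_spend

# Illustrative numbers: 9,000 users saw ads, 1,000 were held out.
iroas = incremental_roas(
    treated_revenue=54_000.0, treated_users=9_000,
    control_revenue=4_000.0, control_users=1_000,
    ad_spend=10_000.0,
)
naive_roas = 54_000.0 / 10_000.0
```

In this made-up example the naive ROAS is 5.4 while the incremental figure is 1.8, because two thirds of the treated revenue would have arrived organically.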

3. The 60-Day Implementation Roadmap

The transformation was executed via a rigid 60-day sprint. This timeline was chosen to force prioritization and avoid the “pilot purgatory” that affects over 40% of AI projects.

3.1 Phase 1: Data Audit and Unification (Days 1-14)

The prerequisite for predictive modeling is a unified data layer. E-commerce data typically exists in silos: transaction data in the ERP (e.g., Shopify/NetSuite), behavioral data in analytics (GA4), and ad performance data in platform silos (Meta/Google).

Key Actions:

- CDP Deployment: A Customer Data Platform (CDP) was configured to ingest data from all sources. This created a “Single Customer View”.

- Identity Resolution: A major challenge was stitching together user profiles across devices. The team utilized deterministic matching (hashing email addresses and phone numbers) to link a user’s mobile browsing session with their desktop purchase.

- Data Hygiene: The audit revealed that 15% of historical data was unusable due to UTM tagging errors. A strict taxonomy was implemented for all future campaigns to ensure clean training data for the models.
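
Deterministic matching of this kind depends on normalizing identifiers before hashing, so that the same email typed differently on two devices yields the same key. The sketch below uses Python's standard `hashlib`; the helper names and normalization rules are assumptions, and a real CDP may apply stricter rules.

```python
import hashlib

def normalize_email(email):
    """Trim whitespace and lowercase so trivially different spellings match."""
    return email.strip().lower()

def normalize_phone(phone):
    """Keep digits only; a production system would also handle country codes."""
    return "".join(ch for ch in phone if ch.isdigit())

def identity_key(value):
    """SHA-256 hex digest used as a join key instead of the raw identifier."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()

# The same person on mobile and desktop resolves to one key.
mobile_key = identity_key(normalize_email("  Jane.Doe@Example.com "))
desktop_key = identity_key(normalize_email("jane.doe@example.com"))
```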

3.2 Phase 2: Model Training and Validation (Days 15-30)

With the data unified, the Data Science team began the modeling phase.

Algorithm Selection: The team chose an XGBoost classifier for the propensity scoring due to its interpretability and speed on tabular data. For the pCLTV estimation, they utilized a Deep Neural Network (DNN) to handle the sequential nature of user visits.

Feature Engineering:

The models were trained on over 50 features, including:

- Static Features: Location, Device Type, Acquisition Channel.

- Dynamic Features: Average Order Value (AOV), Days Since Last Visit, Cart Abandonment Count.

- Derived Features: “Price Sensitivity Score” (calculated from the ratio of full-price to discounted items viewed) and “Category Affinity”.
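
The two derived features can be sketched as follows. The source does not give exact formulas, so both definitions here are assumptions: price sensitivity is taken as the share of viewed items that were discounted (which avoids dividing by zero), and category affinity as each category's share of product views.

```python
def price_sensitivity_score(full_price_views, discounted_views):
    """Share of viewed items that were discounted, between 0.0 and 1.0."""
    total = full_price_views + discounted_views
    if total == 0:
        return 0.0  # no browsing history yet
    return discounted_views / total

def category_affinity(category_views):
    """Normalize per-category view counts into shares that sum to 1."""
    total = sum(category_views.values())
    if total == 0:
        return {}
    return {category: views / total for category, views in category_views.items()}

# Illustrative inputs only.
sensitivity = price_sensitivity_score(full_price_views=2, discounted_views=6)
affinity = category_affinity({"shoes": 3, "bags": 1})
```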

Validation: The model was back-tested against the previous 6 months of data. The initial results showed the model could predict purchase intent with 82% accuracy 7 days in advance. This gave the team the confidence to proceed to activation.
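
A back-test of this kind compares scores issued on a given day with what those users actually did over the following 7 days. The sketch below assumes scores between 0 and 1 and a 0.5 decision threshold, both illustrative. It also reports precision and recall, since accuracy alone can look strong when most visitors never purchase.

```python
def classification_report(scores, outcomes, threshold=0.5):
    """Compare thresholded propensity scores with observed 7-day purchases."""
    tp = fp = tn = fn = 0
    for score, purchased in zip(scores, outcomes):
        predicted = score >= threshold
        if predicted and purchased:
            tp += 1
        elif predicted and not purchased:
            fp += 1
        elif not predicted and purchased:
            fn += 1
        else:
            tn += 1
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

# Illustrative scores and outcomes, not data from the case study.
scores = [0.9, 0.8, 0.3, 0.2, 0.6]
outcomes = [True, False, False, False, True]
report = classification_report(scores, outcomes)
```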

3.3 Phase 3: Technical Integration (Days 31-45)

This phase involved connecting the “Brain” (the model) to the “Hands” (the ad platforms).

Google Ads Integration (Value-Based Bidding):

The team utilized Google’s Offline Conversion Import (OCI) API. This was the most critical technical step.

- Step 1: Capture the Google Click ID (GCLID) for every site visitor.

- Step 2: When a user performs an action (e.g., sign-up), the model calculates their pCLTV in real-time.

- Step 3: This predicted value is uploaded back to Google Ads as a “Conversion Value” via the API.

- Step 4: The bidding strategy was switched from “Target CPA” to “Target ROAS” (tROAS). Google’s algorithm then optimized bids to maximize the predicted value, effectively bidding higher for high-pCLTV prospects.
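
Steps 1 to 3 amount to building one record per click ID and handing it to the upload job. The sketch below only prepares the records as CSV; it does not call the Google Ads API. The field names, the conversion name `predicted_ltv_signup`, and the timestamp format are placeholders to be mapped onto whatever import template or client library is actually used.

```python
import csv
import io
from datetime import datetime, timezone

# Hypothetical column names; map them to the real upload schema.
FIELDS = ["gclid", "conversion_name", "conversion_time", "conversion_value", "currency"]

def build_conversion_row(gclid, predicted_value, occurred_at,
                         name="predicted_ltv_signup", currency="USD"):
    """Pair a captured click ID with the model's predicted value."""
    if not gclid:
        raise ValueError("a click ID is required to attribute the conversion")
    return {
        "gclid": gclid,
        "conversion_name": name,
        "conversion_time": occurred_at.astimezone(timezone.utc).strftime("%Y-%m-%d %H:%M:%S%z"),
        "conversion_value": f"{predicted_value:.2f}",
        "currency": currency,
    }

def to_csv(rows):
    """Serialize rows for a batch upload job."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

row = build_conversion_row(
    "example-click-id", 25.0, datetime(2025, 3, 1, 12, 0, tzinfo=timezone.utc)
)
payload = to_csv([row])
```

Rejecting rows without a click ID up front keeps unattributable conversions out of the batch.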

Meta (Facebook/Instagram) Integration: Similar logic was applied using the Meta Conversions API (CAPI). “Custom Events” were created for “High-Intent Lead” and “Low-Intent Lead,” allowing the ad set to optimize specifically for quality over quantity.
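
A server-side event for this setup could be assembled roughly as below. The payload mirrors the general shape of a Conversions API event (event name, Unix timestamp, hashed email, value), but the custom event names, the score threshold of 50, and the function itself are assumptions for illustration. Field names should be checked against Meta's current documentation before use.

```python
import hashlib

def lead_event(email, propensity_score, predicted_value, event_time,
               high_intent_threshold=50):
    """Build one event dict, choosing the custom event name from the score."""
    if propensity_score >= high_intent_threshold:
        event_name = "HighIntentLead"  # hypothetical custom event name
    else:
        event_name = "LowIntentLead"   # hypothetical custom event name
    hashed_email = hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()
    return {
        "event_name": event_name,
        "event_time": int(event_time),  # Unix timestamp in seconds
        "action_source": "website",
        "user_data": {"em": [hashed_email]},
        "custom_data": {"value": round(predicted_value, 2), "currency": "USD"},
    }

# Illustrative events only.
high = lead_event("jane@example.com", 82, 50.0, 1_740_000_000)
low = lead_event("sam@example.com", 20, 2.0, 1_740_000_000)
```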

3.4 Phase 4: Activation and Optimization (Days 46-60)

The final phase was the “Go Live.”

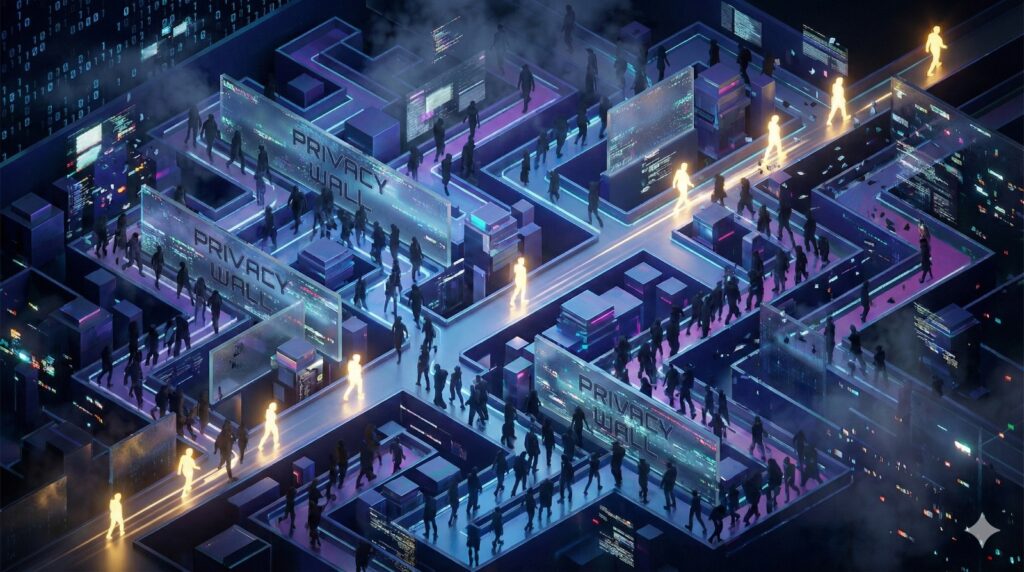

Bid Shading Strategy:

Instead of a binary “bid/no-bid,” the team implemented a tiered bidding structure based on the Propensity Score (0-100).

- Score 90-100: Uncapped bid. Objective: Dominant Impression Share.

- Score 50-89: Standard bid. Objective: Efficient acquisition.

- Score 0-49: Bid floor (minimized). Objective: Only capture cheap inventory.
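
The three tiers above map directly onto a small lookup. In this sketch the score boundaries come from the list above, while the function names and the use of `None` to mean "no cap" are assumptions.

```python
def bid_tier(score):
    """Map a 0-100 propensity score to one of the three bidding tiers."""
    if not 0 <= score <= 100:
        raise ValueError("propensity score must be between 0 and 100")
    if score >= 90:
        return "uncapped"
    if score >= 50:
        return "standard"
    return "floor"

def max_bid(score, standard_bid, floor_bid):
    """Return the bid cap for a score, or None when the tier is uncapped."""
    tier = bid_tier(score)
    if tier == "uncapped":
        return None
    return standard_bid if tier == "standard" else floor_bid

# Illustrative bids in dollars.
caps = [max_bid(s, standard_bid=2.00, floor_bid=0.25) for s in (95, 60, 10)]
```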

Dynamic Creative Optimization (DCO): The team linked the segment data to the creative engine. High-intent users were shown “Urgency” messaging (“Only 3 left in stock”), while low-intent users were shown “Discovery” messaging (“Check out our new collection”) or discount incentives.

4. Technical Architecture and Methodology

The success of the transformation relied on a robust, scalable technical stack designed to handle data ingestion, processing, and activation in near real-time.

4.1 The Data Stack

| Component | Technology Used | Function |
| --- | --- | --- |
| Data Lake | Snowflake | Repository for raw unstructured and structured data. |
| CDP | Segment / Hightouch | Data ingestion pipeline and audience syndication. |
| ML Engine | Python (Vertex AI) | Hosting and running the XGBoost and DNN models. |
| Visualization | Tableau / Looker | Dashboards for monitoring Model Drift and Campaign Performance. |
| Ad Platforms | Google Ads / Meta | End-point for activation via API. |

4.2 Offline Conversion Import (OCI) Mechanism

The OCI mechanism is pivotal for bypassing the limitations of cookie-based tracking.

- GCLID Capture: A hidden field in all lead forms captures the gclid parameter from the URL.

- Hashing: Personally Identifiable Information (PII) such as email addresses and phone numbers is hashed using SHA-256 before being sent to Google to support privacy compliance.

- Upload Frequency: The team automated uploads to occur every 24 hours. Research suggests that daily uploads are critical for Smart Bidding algorithms to learn effectively; delays of 72+ hours significantly degrade performance.

- Value Assignment: The system assigned synthetic values. For example, a “Newsletter Signup” is worth $0 in immediate revenue, but the model might assign it a predicted value of $25 based on the user’s demographic profile. This instructs Google’s AI to treat it as a conversion worth paying for.
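
One way to keep the upload cadence honest is to have the daily job flag conversions that are already close to or past the 72-hour mark. The sketch below is an assumed monitoring helper, not part of any Google tooling; the function name and record layout are hypothetical.

```python
from datetime import datetime, timedelta, timezone

MAX_DELAY = timedelta(hours=72)  # delay beyond which the source reports degraded learning

def split_by_freshness(conversions, now):
    """Partition pending (gclid, occurred_at) pairs into fresh and stale click IDs."""
    fresh, stale = [], []
    for gclid, occurred_at in conversions:
        if now - occurred_at < MAX_DELAY:
            fresh.append(gclid)
        else:
            stale.append(gclid)
    return fresh, stale

# Illustrative pending queue.
now = datetime(2025, 3, 10, 0, 0, tzinfo=timezone.utc)
pending = [
    ("click-a", now - timedelta(hours=20)),
    ("click-b", now - timedelta(hours=80)),
]
fresh, stale = split_by_freshness(pending, now)
```

A non-empty stale list is a signal that the 24-hour schedule has slipped.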

4.3 Handling “Cold Start” with Lookalike Modeling

A common challenge in predictive modeling is the “Cold Start” problem—how to score a new user with no history. The team addressed this by using Lookalike Modeling.

- The attributes of the top 10% of existing high-LTV customers were analyzed to create a “seed audience.”

- Ad platforms were then instructed to find new users who matched these attributes (e.g., specific interests, device types, browsing times).

- This allowed the system to apply a “provisional score” to new visitors until enough first-party behavioral data was collected to generate a personalized score.
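
On the first-party side, a provisional score can be as simple as counting how many of a new visitor's attributes match the typical high-LTV customer. The sketch below uses the most common attribute values as the seed profile and the match share as a 0-100 score. Both choices are assumptions; the lookalike matching performed inside the ad platforms is not shown.

```python
from collections import Counter

def seed_profile(top_customers, attributes):
    """Most common value of each attribute among the top customers."""
    profile = {}
    for attr in attributes:
        counts = Counter(c[attr] for c in top_customers if attr in c)
        if counts:
            profile[attr] = counts.most_common(1)[0][0]
    return profile

def provisional_score(visitor, profile):
    """Share of profile attributes the visitor matches, scaled to 0-100."""
    if not profile:
        return 0
    matches = sum(1 for attr, value in profile.items() if visitor.get(attr) == value)
    return round(100 * matches / len(profile))

# Illustrative attributes only.
top_customers = [
    {"device": "ios", "channel": "email", "daypart": "evening"},
    {"device": "ios", "channel": "search", "daypart": "evening"},
    {"device": "android", "channel": "email", "daypart": "evening"},
]
profile = seed_profile(top_customers, ["device", "channel", "daypart"])
score = provisional_score(
    {"device": "ios", "channel": "social", "daypart": "evening"}, profile
)
```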

5. Detailed Results Analysis

5.1 Primary Metric: CPA Reduction

The initiative achieved its primary goal with a 22% reduction in CPA across paid channels.

- Baseline CPA: $48.50

- Final CPA (Day 60): $37.83

Analysis of the Drop: The reduction was not linear. The first 14 days after predicted values began flowing to the ad platforms (Days 31-45) saw high volatility as the platform algorithms entered the “Learning Phase.” CPA actually increased by 5% initially. However, as the Machine Learning models ingested the feedback loop of predicted values, the system began aggressively cutting spend on the bottom 40% of traffic (the “wasted spend”), causing the CPA to plummet in Weeks 7 and 8.

5.2 Secondary Metrics Impact

5.2.1 ROAS Improvement (+34%)

While CPA dropped, ROAS increased from 2.1x to 2.82x. This indicates that the “cheaper” acquisitions were not lower quality. By shifting budget to high-intent segments, the revenue per user increased. The model successfully identified users who were likely to purchase higher-ticket items or bundles, driving up the Average Order Value (AOV).
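
The headline percentages can be checked directly from the reported before and after figures. This is plain arithmetic on the numbers stated in sections 5.1 and 5.2.1; only the helper name is introduced here.

```python
def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100

cpa_change = percent_change(48.50, 37.83)   # CPA: $48.50 to $37.83
roas_change = percent_change(2.1, 2.82)     # ROAS: 2.1x to 2.82x
```

Rounded to whole percentages, these come to a 22% decrease in CPA and a 34% increase in ROAS, matching the reported results.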

5.2.2 Inventory Efficiency (Stockout Reduction)

An unexpected benefit was the alignment of marketing with supply chain. The predictive model was linked to the inventory management system. If a SKU was low in stock (<5 units), the ad spend for that specific item was throttled down automatically. Conversely, overstocked items received a “boost” in the VBB calculation.

- Result: A 31% reduction in stockouts for best-sellers and a 25% reduction in overstock for slow-movers.
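
The throttle-and-boost rule can be expressed as a per-SKU multiplier applied to the value sent for bidding. The 5-unit low-stock threshold comes from the text above; the overstock threshold and both multiplier values are hypothetical placeholders.

```python
def inventory_bid_multiplier(units_in_stock, overstock_threshold,
                             low_stock_threshold=5, throttle=0.2, boost=1.25):
    """Scale ad value for a SKU based on how much stock is on hand."""
    if units_in_stock < low_stock_threshold:
        return throttle  # nearly sold out: pull spend back
    if units_in_stock > overstock_threshold:
        return boost     # overstocked: push more traffic
    return 1.0           # normal stock: leave the value unchanged

# Illustrative SKUs with an assumed overstock threshold of 500 units.
multipliers = [inventory_bid_multiplier(u, overstock_threshold=500) for u in (3, 120, 900)]
```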

5.2.3 Retention Lift

The focus on pCLTV meant the new cohorts acquired were inherently “stickier.” Early cohort analysis showed a 15% increase in 30-day repeat purchase rates compared to cohorts acquired under the old “Maximize Conversions” strategy. This validates the hypothesis that optimizing for predicted value attracts customers with better long-term retention profiles.

6. Strategic Insights and Discussion

6.1 The “Middle Tier” Opportunity

A profound insight derived from the data was the critical importance of the “Middle Tier” segments.

- The Top 10%: These users are highly likely to convert regardless of ad spend. Spending here is often “preaching to the choir.”

- The Bottom 40%: These users are unlikely to convert regardless of spend.

- The Middle 50%: This is the “Persuadable” zone. The predictive model revealed that the highest marginal ROI came from increasing bids on this middle tier—users who showed interest (e.g., added to cart) but hesitated. Targeted intervention here (e.g., a timely ad or discount) yielded the highest conversion lift.

6.2 Dynamic Pricing as a Conversion Lever

The organization implemented segment-based dynamic pricing (where legal and compliant).

- High-Propensity Users: Were shown standard pricing or value-add incentives (e.g., “Free Priority Shipping”).

- Mid-Propensity Users: Were shown “Flash Sale” discounts (e.g., 10% off) to overcome price resistance.

- Result: This strategy preserved margins on high-intent customers while converting price-sensitive ones. Case studies in similar markets (e.g., Indian D2C brands using 1Checkout) have shown that such dynamic incentives can boost profit margins by preventing unnecessary discounting.

6.3 Data Sovereignty and First-Party Strategy

The transformation underscored the strategic value of owning the data. By building their own predictive models on first-party data, the company insulated itself from the whims of “Walled Gardens” (Google/Meta). Even if Meta changes its targeting algorithm, the company’s internal list of “High-Intent Users” remains a portable asset that can be activated on any channel (Email, SMS, DSPs).

6.4 Ethical Governance and Bias

Predictive bidding and segment-based offers also carry fairness risks. The team had to implement governance checks to ensure the predictive models did not inadvertently discriminate against specific demographics (e.g., zip codes associated with lower income). “Fairness constraints” were added to the model to ensure equitable distribution of offers, aligning with emerging AI ethics standards.
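
The source does not say which fairness constraints were used. One simple audit, sketched here as an assumption, compares the rate at which each group is shown an offer and reports the ratio of the lowest rate to the highest. The group labels and counts are illustrative.

```python
def offer_rates(offers_by_group):
    """offers_by_group maps group -> (users shown an offer, eligible users)."""
    return {group: shown / eligible for group, (shown, eligible) in offers_by_group.items()}

def parity_ratio(rates):
    """Lowest offer rate divided by the highest; 1.0 means identical rates."""
    return min(rates.values()) / max(rates.values())

# Illustrative audit data for two zip-code groups.
rates = offer_rates({
    "zip_group_a": (300, 1000),
    "zip_group_b": (240, 1000),
})
ratio = parity_ratio(rates)
```

A ratio well below 1.0 would prompt a closer look at which features are driving the gap.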

7. Conclusion and Future Outlook

This case study demonstrates that for US e-commerce retailers, the path to lower CPA is no longer found in “better ad copy” or “hacky” targeting tricks, but in structural data transformation. By implementing Predictive ROI Modeling, the subject company reduced CPA by 22% in 60 days, proving that efficiency is a function of information advantage.

Key Takeaways:

- Shift from Retrospective to Prospective: Stop optimizing for what happened; optimize for what will happen.

- Integrate Inventory with Marketing: Your ad spend should breathe in sync with your warehouse levels.

- Value-Based Bidding is Non-Negotiable: Feeding predicted values back into ad platforms is the single most effective lever for efficiency in the automation age.

Future Outlook: As we look toward 2026, the next frontier is Agentic AI. Future models will not just predict the value but will autonomously generate the creative assets and negotiate the media buy to maximize that value, creating a fully autonomous, self-optimizing commerce engine.

Frequently Asked Questions: Mastering Predictive ROI & E-commerce Efficiency

How does this strategy achieve a 22% reduction in Cost Per Acquisition (CPA)?

The 22% reduction in CPA is primarily achieved through a technique called "bid shading." By identifying low-intent segments—users who are unlikely to convert regardless of ad exposure—the predictive model instructs ad platforms like Google and Meta to drastically reduce or eliminate bids for those individuals. This eliminates "wasted spend" on low-probability traffic. Simultaneously, the model identifies high-value "persuadable" users and bids more aggressively for them. This precision ensures that the total marketing investment is concentrated on the most efficient opportunities, naturally driving down the average cost to acquire a customer.

What is the significance of RFMVDA modeling over traditional RFM analysis?

Traditional RFM (Recency, Frequency, Monetary) analysis only looks at transaction history, which can be misleading in a fast-paced e-commerce environment. RFMVDA expands this by adding Visits, Duration, and Actions. This includes tracking how often a user visits the site, how much time they spend on specific product pages, and micro-conversions like downloading a size guide or watching a video. By incorporating these behavioral signals, the model can predict purchase intent with much higher accuracy (often exceeding 90%), allowing brands to identify "high-intent" shoppers even if they haven't made their first purchase yet.

How does Value-Based Bidding (VBB) work with Google Ads and Meta?

Value-Based Bidding shifts the optimization goal of an ad campaign from "quantity" to "quality." Instead of telling Google or Meta to "get as many conversions as possible," the brand uses an API (like Google’s Offline Conversion Import) to send a "Predicted Value" for every lead or interaction. For example, a newsletter signup from a high-intent user might be assigned a synthetic value of $50, while a signup from a low-intent user is valued at $2. The ad platform’s algorithms then prioritize showing ads to users who "look like" the $50 segments, effectively automating high-ROI audience targeting.

Can predictive modeling help with inventory management and stockouts?

Yes, a sophisticated predictive ROI framework can be integrated with inventory management systems to create a "demand-aligned" marketing strategy. By linking the predictive model to real-time SKU levels, the system can automatically throttle down ad spend for products that are low in stock (preventing stockouts and poor customer experiences) and increase visibility for overstocked items. In this case study, this integration led to a 31% reduction in stockouts, ensuring that marketing dollars were only spent driving traffic to products that were actually available for fulfillment.

What is pCLTV and why is it the "North Star" for modern e-commerce?

Predictive Customer Lifetime Value (pCLTV) is a metric that estimates the total net profit a customer will generate over their entire relationship with a brand. Unlike historical CLV, which looks backward, pCLTV uses deep learning to identify patterns in user behavior that suggest future loyalty. By using pCLTV as the "North Star," e-commerce retailers can justify higher acquisition costs for "VIP" segments that will purchase multiple times, rather than overspending on "one-and-done" discount seekers who erode long-term margins.

How does this approach solve the problem of "Signal Loss" and privacy regulations?

As third-party cookies are phased out and privacy measures like Apple's ATT limit tracking, e-commerce brands are losing the "signals" they once relied on. Predictive ROI Modeling addresses this by shifting to a First-Party Data strategy. By collecting and hashing (pseudonymizing) data directly from their own website and CRM, brands can build a proprietary intelligence layer. They then use "Conversion APIs" to pass this first-party data back to ad platforms in a privacy-compliant way, recovering much of the targeting precision that was lost to privacy changes.

What is the "Cold Start" problem in predictive modeling and how is it fixed?

The "Cold Start" problem occurs when the model encounters a brand-new visitor with no prior history to analyze. To solve this, the system uses Lookalike Modeling and Metadata analysis. The model looks at the initial attributes of the new user—such as their acquisition channel, device type, time of day, and the first few pages they click—and compares them to the "seed signatures" of existing high-value customers. This allows the system to assign a "provisional score" to the user immediately, which is then refined in real-time as more behavioral data is collected during the session.

What technical stack is required to implement a 60-day predictive transformation?

A successful implementation typically requires a unified data stack consisting of a Data Lake (like Snowflake) for storage, a Customer Data Platform (CDP) (like Segment or Hightouch) for data ingestion and synchronization, and a Machine Learning Engine (like Python on Vertex AI) to run the models. Additionally, robust visualization tools (like Tableau or Looker) are necessary to monitor model drift and campaign performance. The key is ensuring these systems are "piped" together so that data flows seamlessly from the website to the model and back to the ad platforms.

Is Predictive ROI Modeling suitable for small or mid-market e-commerce brands?

While predictive modeling was once the domain of enterprise giants like Amazon, the democratization of AI and the rise of "plug-and-play" CDPs have made it accessible to mid-market retailers. Any brand with sufficient first-party data (typically 500+ conversions per month) can benefit from these models. The 60-day sprint methodology used in this case study is specifically designed to help mid-market brands implement these advanced features without the need for a massive in-house data science team, focusing instead on rapid deployment and immediate ROI.